What “AI” Really Means in Talent Systems (and Why It Matters)

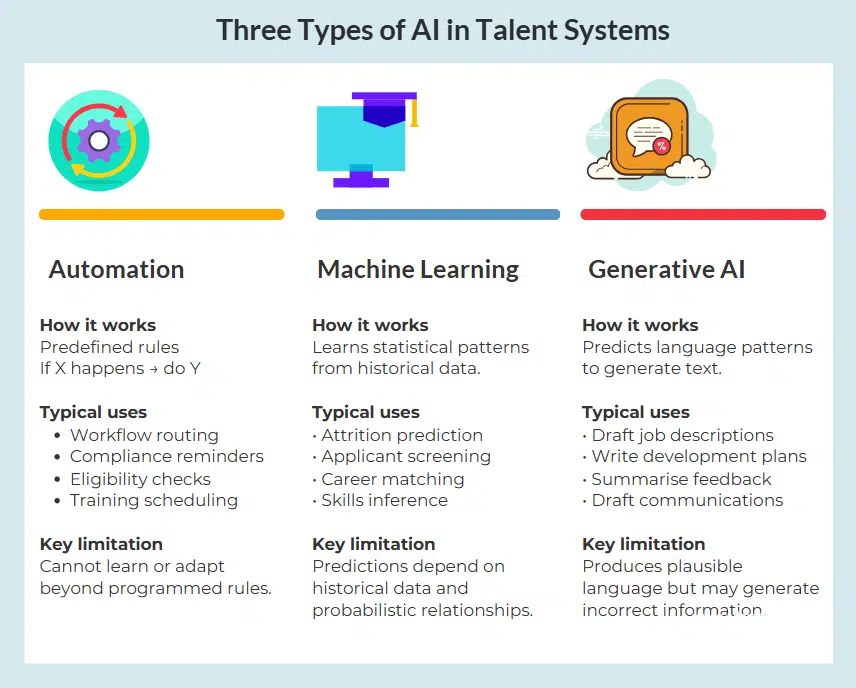

Many talent systems now claim to be “AI-powered,” but the term “AI” can refer to several different technologies.

Each of these technologies relies on different types of algorithms to process information and produce results. Because the underlying mechanisms differ, the capabilities and limitations of these systems also differ.

Understanding how these technologies work helps organisations interpret AI outputs appropriately in hiring, development, and workforce decision processes.

Understanding the mechanism matters more than the marketing.

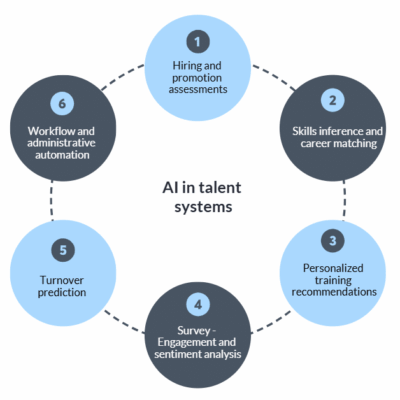

Where AI Is Being Applied in Talent Systems

AI is now promoted as supporting:

- Hiring and promotion assessments

- Skills inference and career matching

- Personalized training recommendations

- Engagement and sentiment analysis

- Attrition prediction

- Workflow and administrative automation

Some of these uses are low risk and efficiency-focused.

Others influence high-stakes decisions that affect careers and organisational outcomes.

Before evaluating value or risk, we need clarity on what type of system is actually in use.

Automation (Rule-Based Systems)

Not all “AI” learns.

Many systems described as AI are actually rule-based automation.

Automation follows predefined logic:

If X happens → do Y.

If criteria met → trigger outcome.

The output is deterministic.

The same input will always produce the same result.
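As a minimal sketch of this "if criteria met → trigger outcome" logic, the example below encodes a single eligibility rule. The `Employee` fields and the 12-month threshold are hypothetical and chosen only for illustration; they are not taken from any particular talent system.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Employee:
    # Hypothetical fields, used only for this illustration.
    months_in_role: int
    mandatory_training_complete: bool


def is_eligible_for_promotion_review(employee: Employee) -> bool:
    """If the criteria are met, trigger the outcome. Nothing is learned from data."""
    # The 12-month threshold is an assumed rule that a designer wrote down.
    return employee.months_in_role >= 12 and employee.mandatory_training_complete


candidate = Employee(months_in_role=18, mandatory_training_complete=True)
first = is_eligible_for_promotion_review(candidate)
second = is_eligible_for_promotion_review(candidate)
print(first, second)  # the two results always match for the same input
```

Every part of the behaviour sits in the rule itself. If the threshold is wrong, it is wrong for every employee the rule touches.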

Structural limitations of rule-based systems

Because automation only executes predefined rules:

- It does not learn from data.

- It does not adapt over time.

- It does not infer patterns.

- It cannot recognize novel situations outside its programmed logic.

There is no training dataset involved. The output reflects only the rules that were explicitly designed.

Automation risk is therefore not about biased data or flawed prediction models. It is about:

- Oversimplified logic

- Poorly defined thresholds

- Missing edge cases

- Rigid decision pathways

- Incorrect weighting of inputs

If the rules are incomplete or poorly designed, automation will execute those flaws consistently — and at scale.

Where automation is used in talent systems

Automation works best when the process is structured, repeatable and rule-driven. Common examples include:

- Workflow routing for approvals

- Eligibility checks for promotions or certifications

- Compliance reminders for expiring qualifications

- Scheduling training or assessments

- Triggering development plans when role changes occur

- Recording completion of required activities

In these situations the decision logic is explicit and the correct outcome can be defined in advance.

Automation improves speed, consistency and auditability, but it cannot infer patterns or adapt beyond the programmed rules.

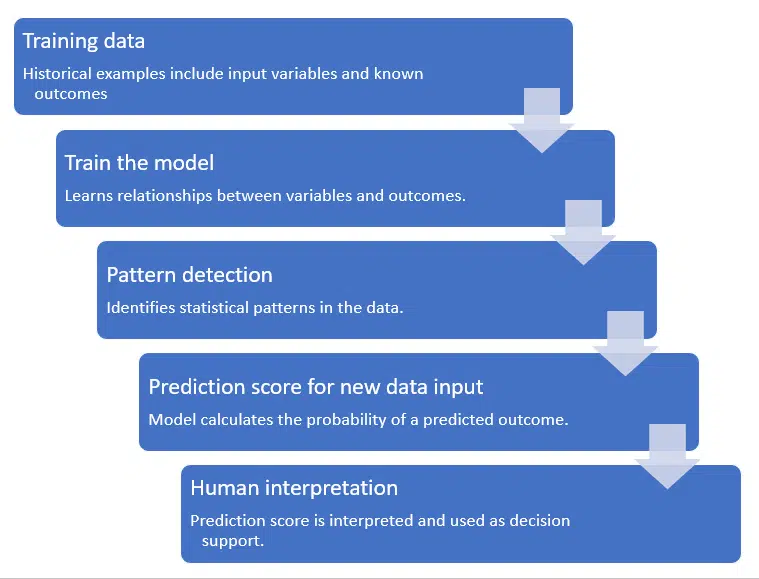

Machine Learning (Pattern-Based Prediction)

When vendors describe predictive hiring, attrition risk scoring, or performance forecasting, they are typically referring to machine learning.

Machine learning systems:

- Learn from historical data

- Identify statistical patterns

- Generate probability estimates

- Can improve as more representative data becomes available, usually through retraining

They are probabilistic, not causal.

A machine learning model does not understand why something happens.

It detects patterns that have occurred in the past and estimates the likelihood they will occur again.
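To make "learning from historical data" concrete, here is a small sketch that fits a one-feature logistic regression by gradient descent using only the Python standard library. The data is invented for illustration (an engagement score from 1 to 5 and whether the person left within a year), and the learning rate and step count are arbitrary assumptions. Real products typically use far richer features and established libraries.

```python
import math

# Hypothetical history: (engagement score 1-5, left within a year? 1 = yes).
history = [(1, 1), (1, 1), (2, 1), (2, 0), (3, 1),
           (3, 0), (4, 0), (4, 0), (5, 0), (5, 0)]


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def fit(data, learning_rate=0.1, steps=5000):
    """Fit a one-feature logistic regression by plain gradient descent."""
    weight, bias = 0.0, 0.0
    for _ in range(steps):
        grad_w = grad_b = 0.0
        for x, y in data:
            error = sigmoid(weight * x + bias) - y
            grad_w += error * x
            grad_b += error
        weight -= learning_rate * grad_w / len(data)
        bias -= learning_rate * grad_b / len(data)
    return weight, bias


weight, bias = fit(history)
low_score_risk = sigmoid(weight * 1 + bias)
high_score_risk = sigmoid(weight * 5 + bias)
print(f"Estimated attrition probability at score 1: {low_score_risk:.2f}")
print(f"Estimated attrition probability at score 5: {high_score_risk:.2f}")
```

The fitted weight only summarises how score and attrition moved together in these ten records. Nothing in the procedure explains why anyone left.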

Types of Machine Learning Used in HR Contexts

Supervised Learning – The model is trained on labelled historical data (e.g., past hires and their performance ratings) to predict an outcome of interest.

Unsupervised Learning – The model identifies clusters or patterns in data without predefined labels.

Natural Language Processing (NLP) – Models analyze patterns in text — such as resumes, survey comments, or written responses — based on word frequency and semantic similarity.
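The word-frequency side of this can be sketched with a simple bag-of-words comparison. The texts below are invented, and this sketch deliberately uses raw word counts only; systems that measure semantic similarity usually rely on learned text embeddings, which are not shown here.

```python
import math
from collections import Counter


def word_counts(text: str) -> Counter:
    """Count how often each lower-cased word appears."""
    return Counter(text.lower().split())


def cosine_similarity(a: Counter, b: Counter) -> float:
    """Cosine similarity between two word-count vectors (0 = no shared words)."""
    shared = set(a) & set(b)
    dot = sum(a[w] * b[w] for w in shared)
    norm_a = math.sqrt(sum(c * c for c in a.values()))
    norm_b = math.sqrt(sum(c * c for c in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


# Hypothetical texts, for illustration only.
job_ad = "project management stakeholder reporting budget planning"
resume_a = "managed project budget and stakeholder reporting"
resume_b = "led teams delivering initiatives on time and within cost"

score_a = cosine_similarity(word_counts(job_ad), word_counts(resume_a))
score_b = cosine_similarity(word_counts(job_ad), word_counts(resume_b))
print(f"Resume A: {score_a:.2f}  Resume B: {score_b:.2f}")
```

Resume B describes broadly comparable experience in different vocabulary and shares no words with the job ad, so a purely word-based match gives it no credit.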

Machine Learning: Why Prediction in Talent Contexts Is Harder Than It Sounds

Machine learning works exceptionally well in domains such as:

- Image recognition

- Speech transcription

- Fraud detection

- Pattern classification

These domains have:

- Clear labels

- Large, structured datasets

- Measurable outcomes

- Stable patterns

Talent decisions are different.

They involve:

- Complex human behaviour

- Context-specific performance

- Subjective evaluation

- Changing organisational environments

This makes prediction materially harder.

Where Predictive Confidence Can Be Misleading

Machine learning outputs often look precise:

“Risk score: 0.82”

“Probability of attrition: 76%”

But these numbers reflect:

- Statistical likelihood

- Model assumptions

- Historical relationships

- Data quality

They do not reflect certainty.

In probabilistic systems: High confidence ≠ guaranteed correctness.
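One way to read a figure such as "76%" is as an expected frequency across many similar cases. The sketch below assumes the model is well calibrated, which is itself a generous assumption, and uses a hypothetical group of 100 employees who all received the same score.

```python
def expected_outcomes(group_size: int, predicted_probability: float) -> tuple[float, float]:
    """Expected counts if the predicted probability is well calibrated (an assumption)."""
    expected_leavers = group_size * predicted_probability
    expected_stayers = group_size - expected_leavers
    return expected_leavers, expected_stayers


leavers, stayers = expected_outcomes(100, 0.76)
print(f"Expected to leave: {leavers:.0f}, expected to stay: {stayers:.0f}")
```

Even in this best case, roughly a quarter of the people flagged would be expected to stay. Treating the score as a verdict on any one individual overstates what the number says.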

Structural Risks That Arise From How Machine Learning Works

These risks are not accidental. They are due to the nature of probabilistic pattern modelling.

Dependence on Historical Data

Machine learning learns from the past. The model will detect patterns in its training data as “success signals.”

It cannot distinguish between historical preference and true predictive factors.

If historical hiring or performance data reflects:

- Biased decisions – historical hiring favored certain groups or career paths

- Imbalanced representation of cases in the training data

- Subjective evaluation practices

The model will reproduce those patterns.
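A deliberately simple sketch shows the mechanism. The "model" here just counts how often each career path was hired in an invented history and reuses that rate as a score. Real models are more sophisticated, but they learn from the same kind of signal.

```python
from collections import defaultdict

# Hypothetical past decisions: (career path, was hired?). Not real data.
past_decisions = (
    [("traditional", True)] * 40 + [("traditional", False)] * 10
    + [("career_changer", True)] * 5 + [("career_changer", False)] * 45
)


def learn_hire_rates(decisions):
    """'Train' by counting how often each career path was hired in the past."""
    totals, hires = defaultdict(int), defaultdict(int)
    for path, hired in decisions:
        totals[path] += 1
        hires[path] += int(hired)
    return {path: hires[path] / totals[path] for path in totals}


scores = learn_hire_rates(past_decisions)
for path, score in scores.items():
    print(f"{path}: learned 'success signal' = {score:.2f}")
```

The learned scores mirror the past hiring rates exactly. Whether those rates reflected candidate quality or reviewer preference is not something the data can reveal.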

Machine learning models assume that the future will resemble the past. They become less reliable when:

- Job requirements evolve

- Market conditions shift

- Strategy changes

- New capabilities become critical

In dynamic organisations, this fragility matters.

Weak Target Measures

Machine learning requires a “target” outcome. In talent systems, this is often job performance. If that target is defined by:

- Subjective manager ratings

- Inconsistent appraisal systems

- Short-term output metrics

the model learns patterns in those ratings, which may not reflect true performance. If the target variable is unreliable, the prediction will also be unreliable.

A model cannot compensate for poorly defined success criteria.

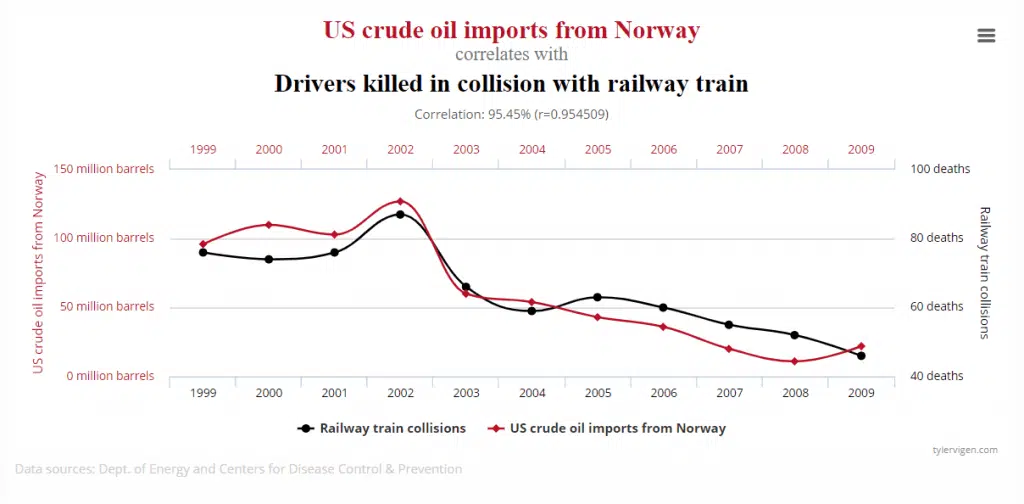

Correlation Is Not Causation

Machine learning identifies correlations.

Correlations may be:

- Coincidental (spurious)

- Proxy relationships (e.g. postcode may act as a proxy for socio-economic status)

- Influenced by hidden variables that are not in the dataset (e.g. family responsibilities)

- Directionally ambiguous (it is unclear which factor drives the other)

A strong statistical relationship does not prove a meaningful cause-and-effect link.

In complex social systems such as organisations, many relationships are indirect or context-specific.

Data Volume and Context Fragility

Effective machine learning requires large volumes of high-quality data.

Most organisations:

- Do not have sufficiently large internal datasets

- Do not have balanced demographic samples

- Do not have consistently measured outcomes

Machine learning models are highly context-specific.

They do not generalize well to novel situations, mergers, new job designs, or strategic shifts.

Vendor-pooled datasets may increase volume but reduce contextual relevance.
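The effect of data volume can be illustrated with the standard margin-of-error formula for a proportion, $z\sqrt{p(1-p)/n}$. The sketch below uses the normal approximation with $z = 1.96$ (roughly a 95% interval) and a hypothetical observed attrition rate of 20%. The sample sizes are assumptions chosen to show the trend.

```python
import math


def margin_of_error(rate: float, sample_size: int, z: float = 1.96) -> float:
    """Approximate 95% margin of error for an observed proportion (normal approximation)."""
    return z * math.sqrt(rate * (1 - rate) / sample_size)


for n in (40, 400, 4000):
    print(f"n={n}: 20% attrition rate, plus or minus {margin_of_error(0.20, n):.1%}")
```

With 40 records the uncertainty is on the order of twelve percentage points, and with 4,000 it is closer to one. This covers sampling noise only. It does not account for biased labels or for changes in context.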

Where Machine Learning is used in Talent Systems

Machine learning is typically used when organisations want to estimate the likelihood of an outcome based on historical patterns. Examples promoted in talent systems include:

- Predicting employee attrition risk

- Identifying potential high performers

- Screening job applicants

- Inferring skills from resumes or work history

- Matching employees to potential career roles

- Detecting themes in employee feedback

These systems attempt to identify statistical relationships between characteristics and outcomes.

Because the predictions depend on historical data and probabilistic models, they should be treated as decision support rather than autonomous decisions.

Generative AI (Large Language Models)

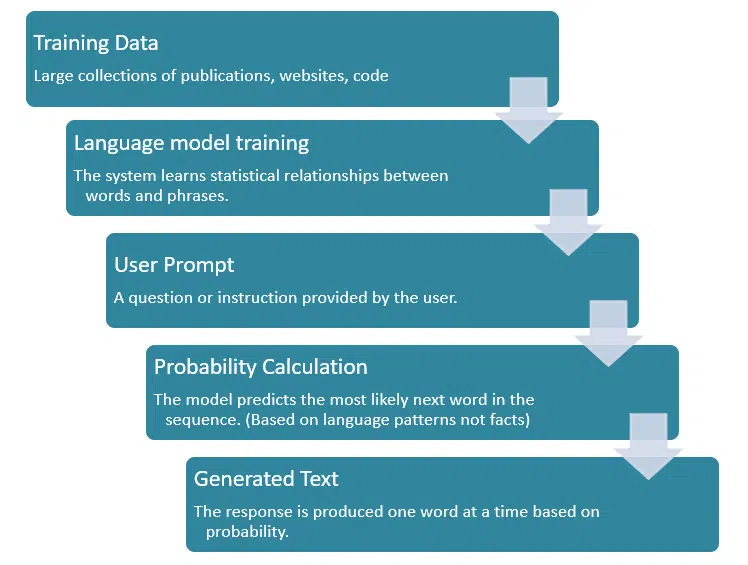

Generative AI systems — such as large language models (LLMs) — operate differently again.

They do not predict outcomes like “high performer” or “attrition risk.”

Instead, they generate text.

These systems:

- Model statistical patterns in language

- Predict the most probable next word in a sequence

- Produce coherent, fluent output

- Are non-deterministic (same prompt may produce different results)

They combine existing patterns in new ways.

They do not verify truth, understand organisational context, or possess domain expertise.
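A toy next-word sampler gives a feel for this mechanism. It is a sketch only: real large language models use neural networks trained on vastly more text, whereas this example simply counts which word followed which in a three-sentence invented corpus.

```python
import random
from collections import Counter, defaultdict

# Tiny hypothetical corpus; real models are trained on vastly more text.
corpus = (
    "the candidate has strong communication skills . "
    "the candidate has strong leadership skills . "
    "the candidate has limited leadership experience ."
).split()

# Count which word follows which.
next_word_counts = defaultdict(Counter)
for current, following in zip(corpus, corpus[1:]):
    next_word_counts[current][following] += 1


def generate(start: str, length: int, rng: random.Random) -> str:
    """Sample each next word in proportion to how often it followed the previous one."""
    words = [start]
    for _ in range(length):
        options = next_word_counts[words[-1]]
        if not options:
            break
        choices, weights = zip(*options.items())
        words.append(rng.choices(choices, weights=weights)[0])
    return " ".join(words)


rng = random.Random()  # unseeded, so repeated runs can differ
print(generate("the", 5, rng))
print(generate("the", 5, rng))
```

Because each word is sampled, the same starting word can yield different sentences. The sampler can also produce "the candidate has strong leadership experience", a fluent statement that appears nowhere in the corpus and that nothing has verified.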

Structural Risks of Generative AI

Because generative systems optimize for plausible language:

Hallucination Risk – They may produce confident but incorrect statements.

Prompt Dependency – Output quality depends heavily on how the prompt is framed.

Inconsistent Output – Identical prompts may not produce identical results.

Over-Generalized Language – Generated content can sound authoritative but lack contextual depth.

Generative AI is powerful for drafting and summarization — but it should not be confused with validated assessment or predictive modelling.

Common Uses in Talent Systems

Generative AI is best suited to content creation and summarisation tasks.

Typical uses include:

- Drafting job descriptions

- Generating competency statements

- Writing development plan suggestions

- Summarizing 360 feedback comments

- Drafting performance review narratives

- Producing training materials

These systems are powerful writing assistants. However, their output is neither fully consistent nor reliably factual, so it should be treated as draft content requiring human review.

What Is an Algorithm?

Different types of AI rely on different kinds of algorithms.

- Rule-based automation uses algorithms that follow predefined logic.

- Machine learning systems use algorithms that analyze historical data to detect statistical patterns and estimate probabilities.

- Generative AI systems use complex algorithms built on large language models that predict likely word sequences in order to produce text.

The type of algorithm used determines how the system behaves and what kind of output it produces.

- Some algorithms produce deterministic results — the same input always produces the same output.

- Others produce probabilistic results — the output represents a statistical likelihood rather than a guaranteed outcome.

Understanding this distinction helps explain why different AI technologies must be interpreted in different ways.

Deterministic vs Probabilistic Systems

Understanding whether a system is deterministic or probabilistic changes expectations.

- Deterministic systems: Same input → same output.

- Probabilistic systems: Same input → output expressed as likelihood.

Most HR-related predictions are probabilistic. That means outputs are estimates, not guarantees.

Overconfidence arises when probabilistic outputs are treated as definitive decisions.

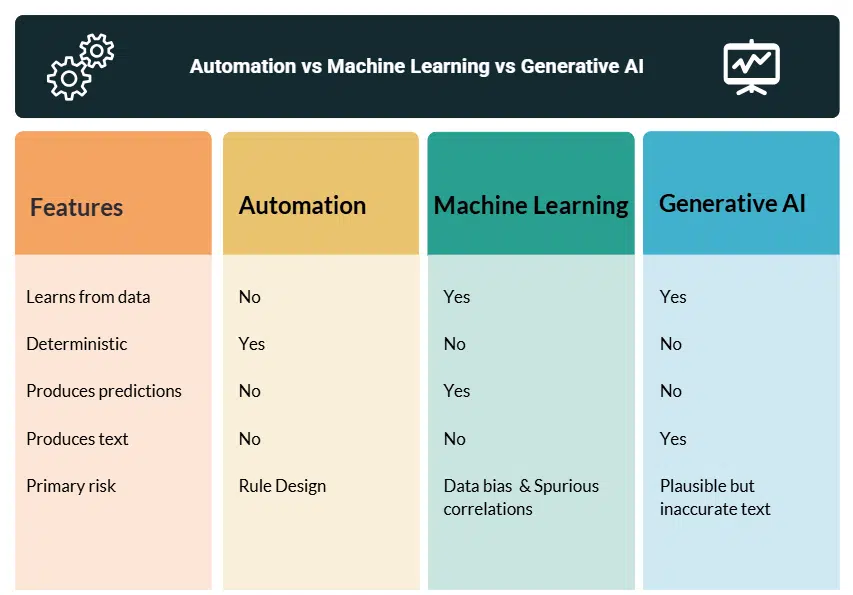

Comparing the Three Approaches

Understanding the difference between automation, machine learning, and generative AI clarifies both capability and limitation.

| Feature | Automation | Machine Learning | Generative AI |
|---|---|---|---|
| Learns from data | No | Yes | Yes |
| Requires training dataset | No | Yes | Yes (large-scale pretraining) |
| Deterministic output | Yes | No (probabilistic) | No (non-deterministic) |
| Produces text | No | No | Yes |
| Detects statistical patterns | No | Yes | Yes (in language) |
| Adapts over time | Only if manually reprogrammed | Yes (with retraining) | Yes (model updates) |
| Suitable for workflow control | ✓ | Sometimes | No |
| Suitable for predictive scoring | No | ✓ | No |
| Suitable for drafting/summarizing | No | Limited | ✓ |
| Primary risk type | Design rigidity | Data & modelling distortion | Hallucination & overconfidence |

This distinction matters. Many systems combine elements of all three.

But understanding which mechanism is driving a feature changes how its output should be interpreted.

Using AI Effectively in Talent Systems

Different AI technologies are suited to different kinds of tasks.

- Automation works best when processes are structured and rule-driven.

- Machine learning is most useful when large, reliable datasets exist and the goal is to identify statistical patterns.

- Generative AI is best suited to language-based tasks such as drafting, summarizing and structuring information.

Problems arise when organisations expect one type of system to perform tasks suited to another.

- Automation cannot infer patterns beyond its rules.

- Machine learning predictions depend heavily on historical data.

- Generative AI produces plausible language but does not verify factual accuracy.

Understanding the mechanism behind each type of system helps organisations apply AI where it adds value — improving efficiency while maintaining sound judgement in decisions that affect people.

AI in HR: Key Questions

As organisations increasingly adopt AI tools across recruitment, performance management, and workforce planning, several practical questions arise about how these technologies should be interpreted and used.

The following answers address some of the most common questions about AI in talent systems.

What does “AI” actually mean in HR systems?

In most talent systems the term “AI” can refer to several different technologies.

These typically include rule-based automation, machine learning models that detect statistical patterns in data, and generative AI systems that produce text based on language models.

Because these technologies operate in different ways, their outputs should be interpreted differently. Understanding which mechanism is being used is essential before relying on the results.

What is the difference between automation and AI?

Automation follows predefined rules programmed by system designers. When specific conditions are met, the system executes a predetermined action.

Machine learning systems, by contrast, identify patterns in historical data and generate probabilistic predictions about future outcomes.

Generative AI systems produce language output based on statistical models of text.

Although these approaches are often grouped together under the label “AI,” they serve different purposes and carry different limitations.

Can AI reliably predict employee performance?

Machine learning models can identify patterns in historical data that may correlate with job performance.

However, predicting human behaviour is complex. Many organisations lack reliable measures of individual performance, and historical data may reflect past organisational conditions rather than future requirements.

As a result, predictive models are typically best used as decision-support tools rather than as fully automated decision systems.

Is generative AI used to assess employees?

Generative AI is primarily used for language-based tasks rather than assessment.

Typical uses include drafting job descriptions, summarising feedback comments, writing development plan suggestions, or assisting with documentation.

These systems generate plausible text but do not perform validated assessments of skills, capability, or job performance.

Can AI remove bias from hiring decisions?

AI systems can sometimes help standardise processes and reduce certain types of inconsistency.

However, machine learning models trained on historical data may also reproduce patterns that existed in that data. If previous hiring decisions were imbalanced or influenced by subjective criteria, those patterns may be reflected in the model’s predictions.

Careful design, validation, and oversight are therefore required when using AI-assisted decision tools.

What questions should organisations ask vendors about AI in HR systems?

Before adopting an AI-enabled talent system, organisations should clarify what type of technology is being used, what data the system relies on, how predictions are generated, and how outputs should be interpreted.

Understanding the mechanism behind the system helps ensure it is used appropriately and that its limitations are recognised.